OCR Automation Workflow: Handwriting to Markdowns (Second Brain)

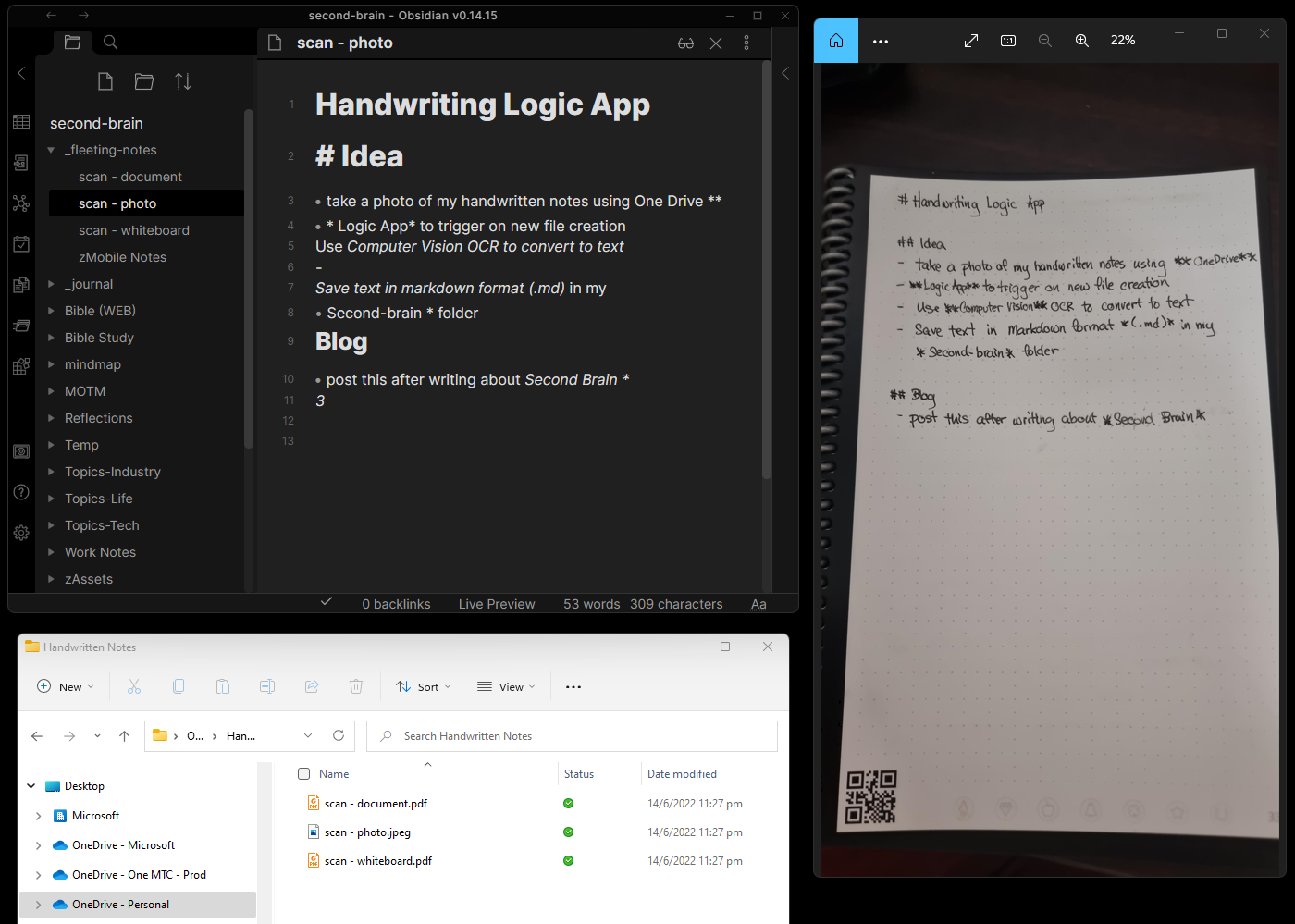

Yesterday, I posted about how I am building a Second Brain using Obsidian. In this post, I am sharing how I created an automated workflow that converts handwritten notes into markdown (*.md) files and saves them in my second brain.

Handwriting opens up the conversation

There are many articles that talk about the benefits of handwriting, but what prompted me to build this was that writing in my second brain required either a laptop or my mobile phone. That is not a very pleasant social experience, even when I’m simply using these devices to take notes in a meeting. As Jackie in Mr Iglesias put it, a laptop tends to create a barrier between you and the people you’re meeting.

Solution

Some time back, I posted an article on how I automated handwriting with markdowns, which are reformatted and saved into OneNote. This solution is similar: I use the OneDrive mobile app to scan my notes, and Microsoft Azure to convert them into a markdown file.

OneDrive

The OneDrive mobile app is my tool of choice because it makes document scanning very easy. You can use the camera to scan:

- Documents (or notes)

- Whiteboards

- Business Cards

- Photos

These images are then saved into a drop folder within my OneDrive account.

Azure Cognitive Services - Computer Vision API

Microsoft Azure includes a set of cognitive services making it easy to implement AI-infused apps. One of these services is a Computer Vision API with Optical Character Recognition capabilities.

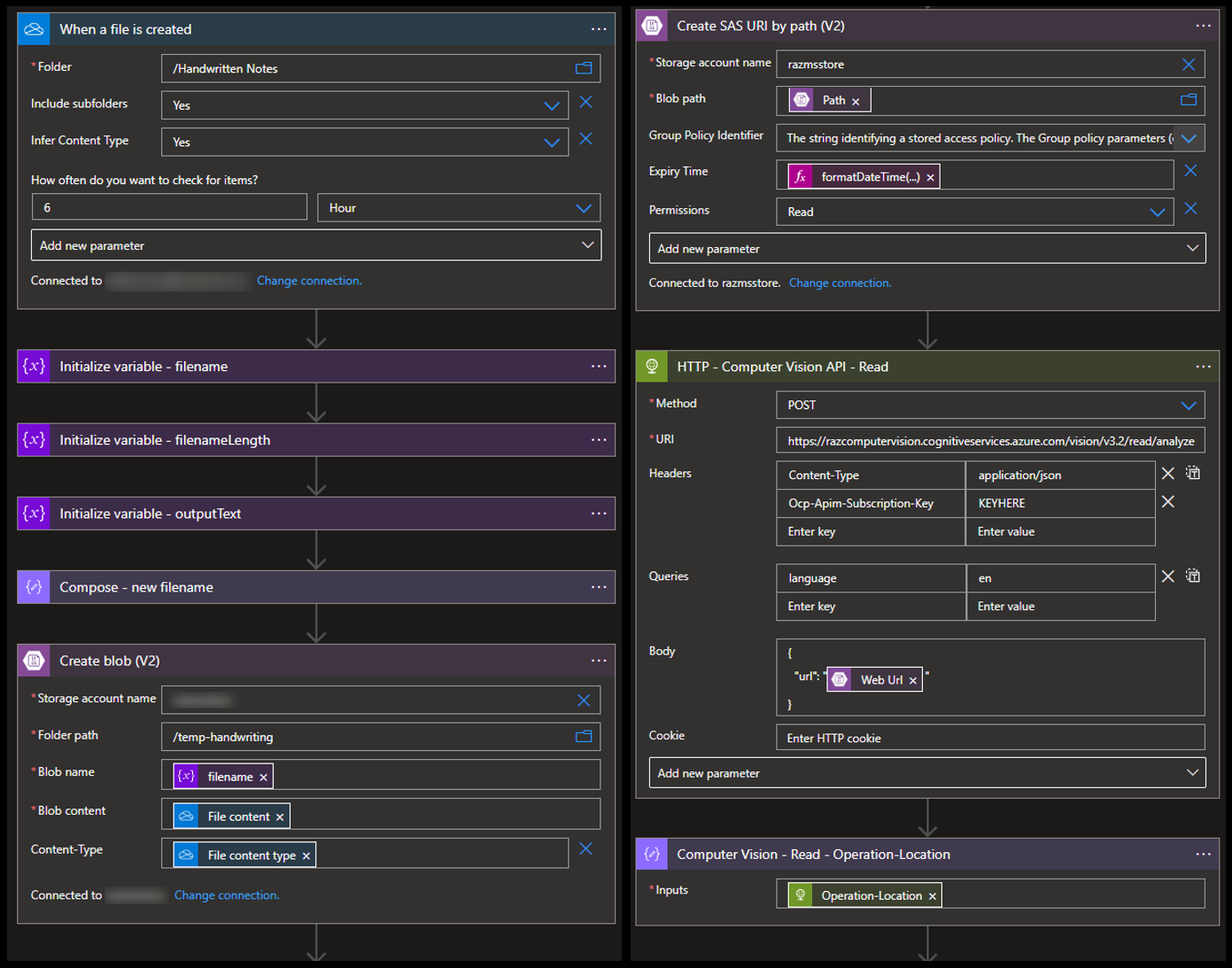

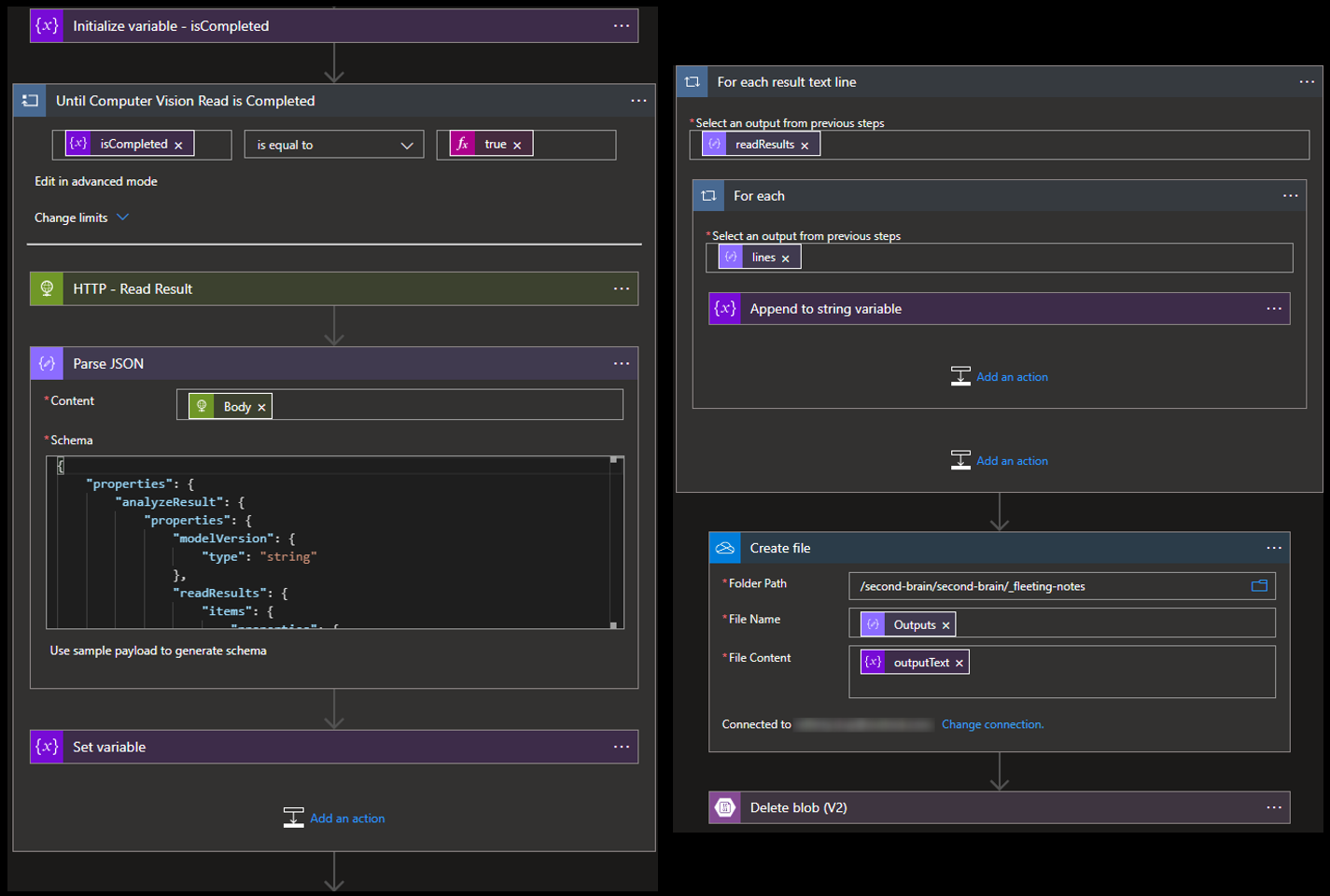

To implement this, I used the Read API, which recognizes text in JPEG, PNG, BMP, PDF, and TIFF files. Since OneDrive whiteboard and document scans are saved as PDF, the Computer Vision API is the perfect fit for the handwriting scan formats that I expect.

The Read API…

- Takes a URL as input

- Returns a response with an Operation-Location key/value pair in its header. This is a GET REST API URL that will eventually return the recognized text in a JSON result.
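The submit-then-poll pattern described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Logic App itself: it assumes the v3.2 Read endpoint path (`/vision/v3.2/read/analyze`), a subscription key passed in the `Ocp-Apim-Subscription-Key` header, and the `status` / `analyzeResult.readResults` layout of the result JSON. The function names (`submit_read`, `fetch_json`, `poll_result`, `extract_lines`) are hypothetical helpers invented for this example.

```python
import json
import time
import urllib.request


def submit_read(endpoint, key, image_url, opener=urllib.request.urlopen):
    """POST the file URL to the Read API and return the Operation-Location URL."""
    req = urllib.request.Request(
        # Assumption: v3.2 of the Read API; adjust the path for other versions.
        f"{endpoint.rstrip('/')}/vision/v3.2/read/analyze",
        data=json.dumps({"url": image_url}).encode("utf-8"),
        headers={
            "Ocp-Apim-Subscription-Key": key,
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with opener(req) as resp:
        # The recognized text is not in this response, only the URL to poll.
        return resp.headers["Operation-Location"]


def fetch_json(url, key, opener=urllib.request.urlopen):
    """GET the operation URL and decode the JSON body."""
    req = urllib.request.Request(url, headers={"Ocp-Apim-Subscription-Key": key})
    with opener(req) as resp:
        return json.load(resp)


def poll_result(operation_url, key, fetch_json=fetch_json, interval=1.0, max_tries=30):
    """Poll the operation URL until the status is 'succeeded' or 'failed'."""
    for _ in range(max_tries):
        result = fetch_json(operation_url, key)
        if result.get("status") in ("succeeded", "failed"):
            return result
        time.sleep(interval)
    raise TimeoutError("Read operation did not finish in time")


def extract_lines(result):
    """Flatten the recognized lines of every page into a list of strings."""
    pages = result.get("analyzeResult", {}).get("readResults", [])
    return [line["text"] for page in pages for line in page.get("lines", [])]
```

In a Logic App the same two calls would typically be HTTP actions, with the polling handled by a loop; the sketch just makes the order of operations explicit.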

Putting it together with Azure Logic Apps

Azure Logic Apps is a low-code/no-code cloud-native workflow automation service. Using this service, I could implement this solution quickly (writing this blog took longer).

As seen in the image at the top of this post, a new note is now ready for me to clean (fix accuracy, add tags and links, etc.) and reorganize when I’m back on my desktop.
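For illustration, the last step of the workflow, turning the recognized lines into a markdown note, might look something like the sketch below. The note layout, the timestamp-based title, and the helper names (`lines_to_markdown`, `save_note`) are assumptions made for this example; the actual Logic App may name and format its notes differently.

```python
from datetime import datetime
from pathlib import Path


def lines_to_markdown(lines, title):
    """Build a simple markdown note: a heading followed by the recognized lines."""
    # Drop blank lines and stray whitespace left over from recognition.
    body = "\n".join(line.strip() for line in lines if line.strip())
    return f"# {title}\n\n{body}\n"


def save_note(lines, folder, now=None):
    """Write the note into the given folder (for example, an Obsidian vault)."""
    now = now or datetime.now()
    # Hypothetical naming scheme: one note per scan, titled by timestamp.
    title = f"Scan {now:%Y-%m-%d %H%M}"
    path = Path(folder) / f"{title}.md"
    path.write_text(lines_to_markdown(lines, title), encoding="utf-8")
    return path
```

The note is intentionally plain, since tags, links, and corrections are added by hand afterwards.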

Try it out!

If you want to try it out, you may quickly deploy this logic app from this GitHub Repo.